Increase data usage with a simple data catalog

The modern world is built on data, but data teams need an organized way to work with it efficiently. Lentiq provides a data management system built for data teams that want to know exactly what they are working with.

Teams can work on independent projects using only the data they need. No extras, because less is more. Sharing is where data governance comes in: you can apply strict rules when sharing data with other teams or departments, if needed.

Making sense of data is hard enough; organizing it so it can be put to good use should be as easy as possible. Now it is. With our data management system, teams can annotate files and tables, enrich data with context, and document both data and metadata. Let your team know exactly what they are working with.

Got a project? Document files and tables and make sense of them.

Understand the data used in a project by adding metadata, annotating the data with notes and attaching notebooks with recent edits. Then share the key insights with the team.

A Data Catalog to rule them all. Designed for datasets.

Lentiq's data catalog lets you add tags and categories so that datasets are understandable at first glance. Everything is beautifully organized. No questions.

Subscribe to datasets and stay updated.

Our data management feature lets users subscribe to a dataset, regardless of the cloud it’s hosted on. Because you are notified of every change to the dataset, you can be confident that your analysis is in sync with the freshest data. Data exploration has never been more accurate.

We believe in freedom of data, but we also know the importance of restrictions. You get both. Teams can work strictly with the data they need, while access to sensitive data can be restricted so that only project owners can manipulate it. This is what we call true freedom.

Freedom to choose

Our data catalog is built to allow different levels of access for different users. A data pool project owner can secure highly sensitive data found in a data pool and make it visible only to users working on that particular project. Top-notch privacy and security for true peace of mind.
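
To make the visibility rule concrete, here is a minimal Python sketch of the idea. It is an illustration only, not Lentiq's implementation or API: the `Dataset` and `User` classes, the `sensitive` flag and the `visible_datasets` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dataset:
    """Hypothetical catalog entry; field names are assumptions for illustration."""
    name: str
    project: str
    sensitive: bool = False


@dataclass(frozen=True)
class User:
    """Hypothetical user with the set of projects they work on."""
    name: str
    projects: frozenset = frozenset()


def visible_datasets(user, catalog):
    """Return the names of datasets the user may see.

    Rule modeled here: non-sensitive data is visible to everyone, while
    sensitive data is visible only to users working on the owning project.
    """
    return [
        d.name
        for d in catalog
        if not d.sensitive or d.project in user.projects
    ]


catalog = [
    Dataset("web_traffic", project="marketing"),
    Dataset("payroll", project="hr", sensitive=True),
]
analyst = User("ana", frozenset({"marketing"}))
print(visible_datasets(analyst, catalog))
```

In this sketch, a user on the marketing project sees the shared dataset but not the sensitive one owned by another project.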

Encourage data exploration

You can store data relevant to all departments and make it available to the entire team, regardless of the project. With easy access to data, teams collaborate more efficiently.

Care-free experimentation

Once data is documented, multiple teams and departments can use it for different projects without affecting the original source data. Teams can experiment, analyze and play around with data, and no damage is done.

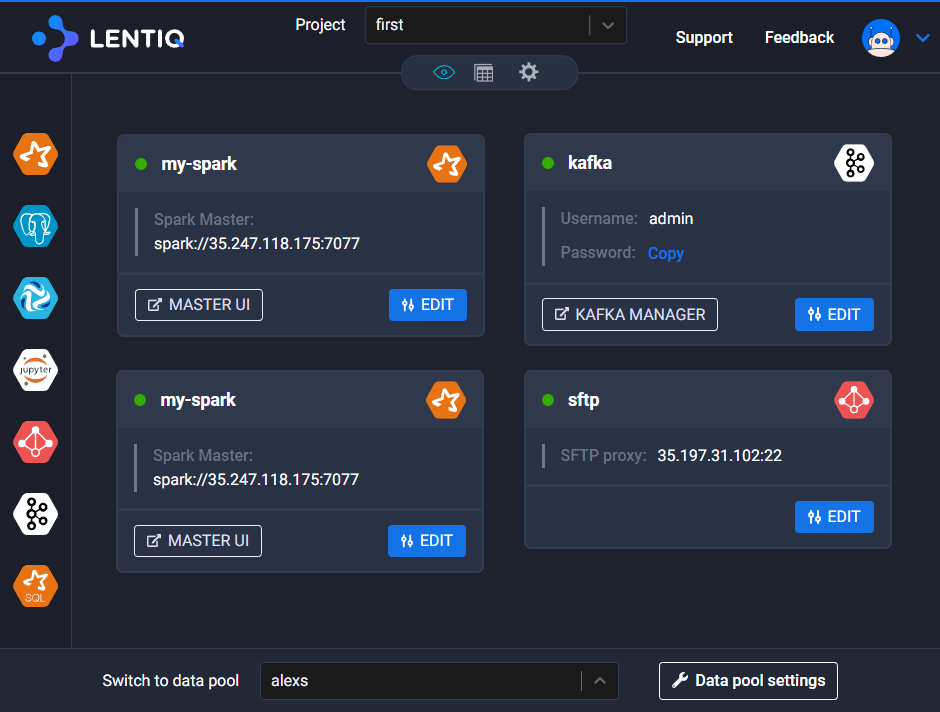

Whatever the cloud, whatever the project, data and tables are managed the same way. Using the cross-cloud capabilities of Lentiq's data pools, teams work together easily and control everything in a few clicks. Have a look.

Whatever BI tools your data teams use, as long as those tools speak SQL, you can connect them to the data stored in Lentiq. Our JDBC connector and Spark SQL engine give you interactive data access.
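
As a rough illustration of what a SQL-speaking tool needs in order to connect, the Python sketch below assembles a JDBC URL and a small preview query. It rests on assumptions not confirmed here: that the endpoint accepts the HiveServer2-style URL used by Spark's Thrift JDBC server, and that the host, database and table names (all hypothetical) are replaced with values from your own deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JdbcEndpoint:
    """Hypothetical connection details; replace with your deployment's values."""
    host: str
    port: int = 10000  # default port of Spark's Thrift JDBC/ODBC server
    database: str = "default"

    def url(self) -> str:
        # Assumption: HiveServer2-style URL, as used by Spark's Thrift JDBC server.
        return f"jdbc:hive2://{self.host}:{self.port}/{self.database}"


def preview_query(table: str, limit: int = 10) -> str:
    """Build a small exploratory query of the kind a BI tool might send."""
    # Accept only simple identifiers such as "orders" or "sales.orders".
    if not table.replace("_", "").replace(".", "").isalnum():
        raise ValueError(f"unexpected table name: {table!r}")
    if limit <= 0:
        raise ValueError("limit must be positive")
    return f"SELECT * FROM {table} LIMIT {limit}"


endpoint = JdbcEndpoint(host="lentiq.example.com")  # hypothetical host
print(endpoint.url())
print(preview_query("sales.orders", limit=5))  # hypothetical table
```

The URL is what you would paste into a BI tool's JDBC connection dialog; the query is ordinary Spark SQL.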